Earlier this month I had the opportunity to participate in the VMware Exclusive Blogger Early Access Program, in which VMware experts share news and upcoming product features ahead of the actual release date.

I would like to thank Pete Kohler and John Nicholson for the feature briefing on the release of vSAN 8 Update 2. I’d also like to thank Heather Haley from VMware’s global communications team and Corey Romero for helping to make it happen.

[This blog post was under embargo until August 22, 5:00 AM PDT (14:00 CEST)]

VMware vSAN 8 Update 2 delivers a range of new features and improvements in flexibility, performance and ease of use.

New ESA Features at a glance

- vSAN ESA storage-only clusters with vSAN Max

- VMware Cloud Foundation Support for vSAN ESA in VCF 5.1

- vSAN file services now available on vSAN ESA

- New ESA Ready Node Profiles for small environments

- Support for a new class of read-intensive storage devices

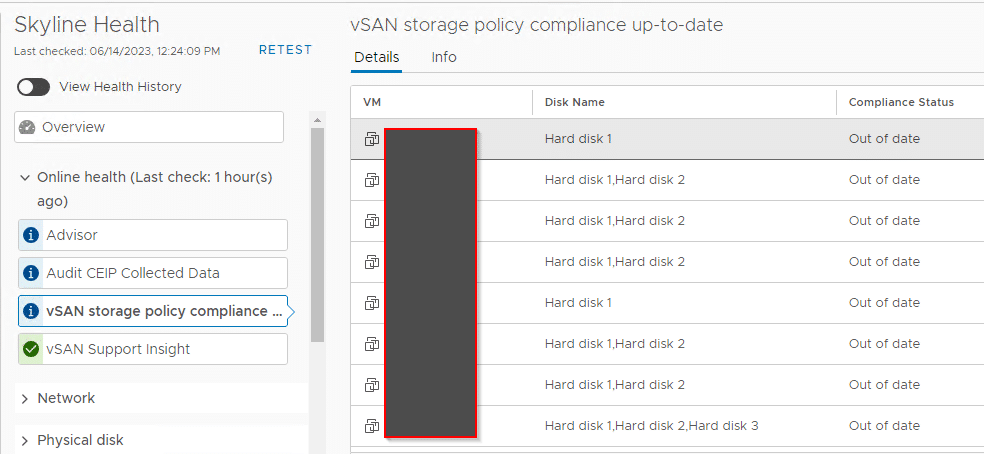

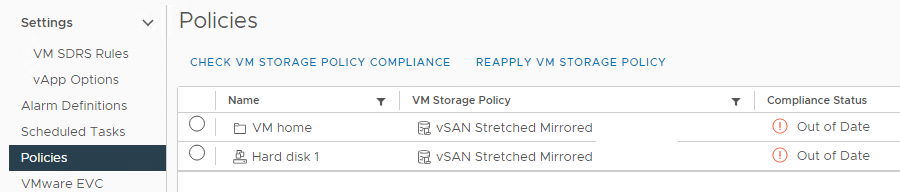

- Auto policy remediation

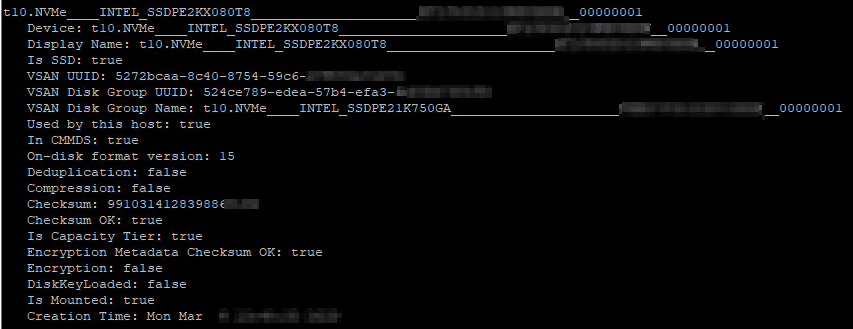

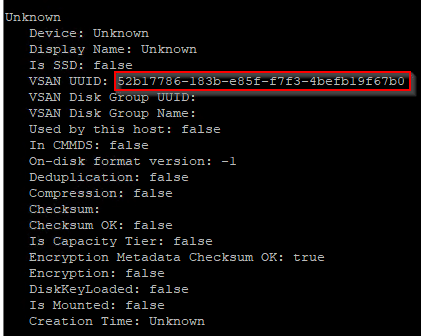

- ESA Prescriptive Device Claim

- Stretched and 2-node cluster improvements

- Up to 500 VMs per host (up from 200)