Patch build 16324942 for ESXi 7.0 was released on June 23rd 2020. It raises ESXi 7.0 GA to ESXi 7.0b. As usual I’m patching my homelab systems ASAP. As all hosts are fully compliant with the HCL, I chose a fully automated cluster remediation by vSphere Lifecycle Manager (vLCM).

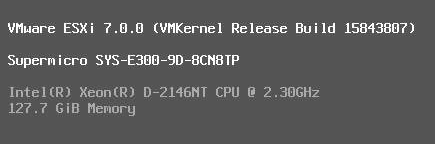

The specs

| Component | Value |
| --- | --- |
| Server | SuperMicro SYS-E300-9D-8CN8TP |
| BIOS | 1.3 |
| ESXi | 7.0 GA build 15843807 (before) / 7.0b build 16324942 (after) |
| HCL compliant | yes |

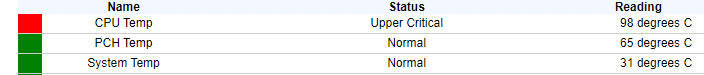

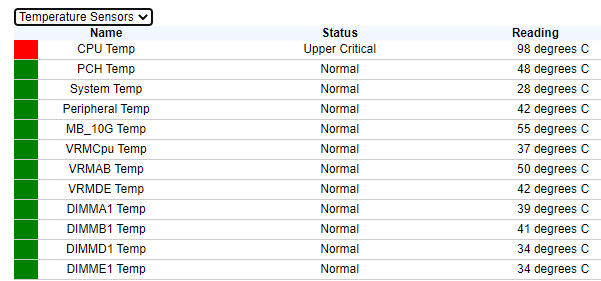

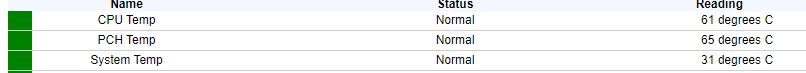

During host reboot I noticed a temperature warning LED on the chassis. A look into IPMI revealed a critical CPU temperature state. The fans responsible for CPU airflow were also running at maximum speed.

As you can see, system temperature was moderate and fans usually run at low to medium speed under these conditions. Air intake temperature was 25°C.

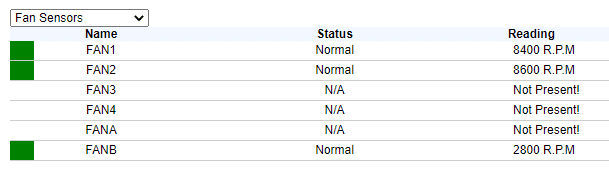

My ESXi nodes rebooted with the new build 16324942 and there were no errors in vLCM. But I could hear that something was wrong. A fan running at over 8000 RPM will tell you there IS something to look after. The boot procedure also took much longer than usual.

I quickly shut down the whole cluster in order to avoid a core meltdown.

First I checked whether something was obstructing the airflow, which could be the cause of overheating. But that wasn’t the case, and it wouldn’t explain why four hosts showed the same problem immediately after patching.

I repeated the investigation of the thermal issue by watching the temperature increase during host boot. I let the host cool down to room temperature, which was 25°C. While the host was in BIOS and power-on self-test (POST), CPU temperature was normal. While ESXi was loading drivers, all sensors were still nominal. But once the black screen changed to the yellow-grey boot screen, when the VMkernel is loaded, CPU temperature jumped from around 60°C to a critical 98°C within a second. I could reproduce that on all hosts.

Is it Hardware or Software?

To rule out a hardware problem, I booted one host into an Ubuntu live Linux. Temperatures didn’t rise. CPU temperature started at 61°C and dropped to 52°C after 4 minutes. So we can rule out any hardware failures or bad settings.
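If you want to log temperatures from inside the live Linux session instead of watching IPMI, a minimal sketch could look like the one below. It assumes the kernel exposes its thermal zones under `/sys/class/thermal` with values in millidegrees Celsius, which is the usual sysfs layout, but which zones show up depends on the board and the loaded drivers. The function name `read_thermal_zones` is mine and only serves the illustration; it is not what I used for the readings above.

```python
from pathlib import Path


def read_thermal_zones(base="/sys/class/thermal"):
    """Return {zone_name: degrees_celsius} for every readable thermal zone.

    Assumption: each zone directory holds a 'temp' file with the reading
    in millidegrees Celsius (standard Linux sysfs thermal layout).
    """
    root = Path(base)
    if not root.is_dir():
        return {}  # no thermal sysfs tree on this system

    readings = {}
    for zone in sorted(root.glob("thermal_zone*")):
        try:
            millidegrees = int((zone / "temp").read_text().strip())
        except (OSError, ValueError):
            continue  # zone without a readable sensor value
        readings[zone.name] = millidegrees / 1000.0
    return readings


# Print one snapshot; call this in a loop to watch the trend over time.
for name, celsius in read_thermal_zones().items():
    print(f"{name}: {celsius:.1f} C")
```

Taking a snapshot every few seconds is enough to see whether the temperature settles, as it did here, or climbs.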

The cluster used to work properly under vSphere 7 GA (build 15843807). So it’s worth a try to roll back to the older version.

Rollback to GA

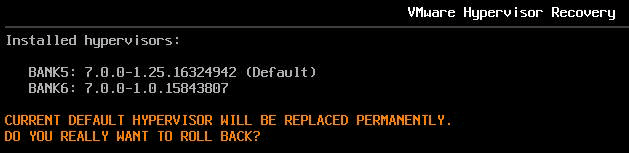

ESXi offers a recovery boot function. As soon as the ESXi boot loader appears, press [Shift] + [R] and you’ll see a screen similar to the picture below.

You can see both installed versions. The new ESXi 7.0b is default on boot bank5 and there’s also the 7.0 GA version on boot bank6.

Confirm the rollback with [Y] and the system will boot next time with build 15843807.

The host came up with the build shown in the picture above and there was neither a warning indicator, nor did the fans run at full speed. A look into IPMI showed all sensors were nominal.

What can we say for now?

Well, we can show that there’s no hardware failure, because the system ran smoothly with an alternative boot image (Ubuntu 20.04 LTS) and it also showed no issues with the original ESXi 7.0 GA boot image.

We can also rule out a strange coincidence, because 4 hosts showed the same behavior right after patching.

Next steps

I will try to boot with the updated ESXi 7.0b image ISO. There are two of them:

| Image and build | Contents |
| --- | --- |
| ESXi 7.0b – 16324942 | Security and Bugfix image |
| ESXi 7.0bs – 16321839 | Security only image |

We’ll see if the issue persists when booting directly from the image, or if there’s any difference between the security only image and the bugfix & security image.

Update July 5th 2020

I’ve posted my findings in our #vExpert Slack channel. Either I’m completely wrong, or I cannot be the only one. Luckily I got support from my buddy Kev Johnson, who confirmed the issue on one of his two hosts after the update.

Unfortunately I wasn’t able to test a direct boot with an updated ISO image (see table above), because I didn’t find these images in the downloads. If you know where to find them, please let me know and send me a link.

Update July 6th 2020

Meanwhile we have a discussion thread on VMTN.

Update July 17th 2020

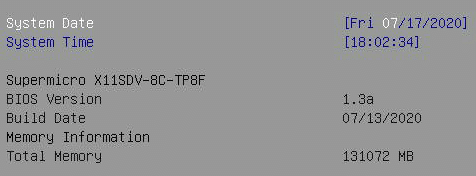

A big shoutout to my buddy Kev Johnson, who opened a support ticket with SuperMicro. He received an unofficial beta BIOS release 1.3a, which looked promising. I also opened a support ticket with SuperMicro and referred to Kev’s issue. Soon after, I received the beta BIOS too.

I upgraded BIOS on my first ESXi host from 1.3 to 1.3a. As you can see in the picture below, it’s brand new.

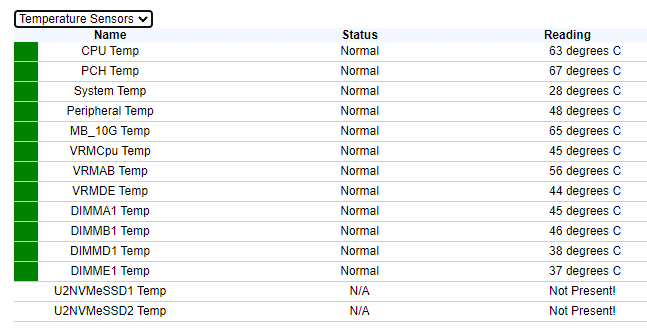

After I had monitored one host with the new BIOS for a while and nothing strange happened, I decided to flash all of my E300-9D hosts with the new BIOS. Then I fired up the vSAN cluster and applied the latest ESXi patches. These patches used to cause thermal issues in combination with the old BIOS 1.3, but now with 1.3a the CPU temperature remained at a normal level.

The cluster operated several hours without any disturbance. We can assume that the BIOS update has fixed the problem.

How do these microcode updates work?

Is there a difference between microcode upgrades by vendor BIOS or OS patch? Or can they interfere with each other? Well, no. Basically the CPU has a versioning mechanism. During boot it makes sure to utilize the latest microcode version. If the BIOS microcode version is lower than the microcode offered by the OS, it will use the one from the OS patch. If not it’ll keep the microcode version from the BIOS.

If we go into the details of patch ESXi 7.0b, we can see that it includes microcode updates for all kinds of CPU generations. My E300-9D servers are equipped with Intel Xeon D-2146 processors (Skylake). The VMware patch offers microcode version 0x02006901 for the D-2100 CPU series. The new beta BIOS from SuperMicro will flash microcode 0x02006906, which is higher. Therefore nothing happens during boot. The microcode patch included in the OS patch doesn’t get applied.
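The selection rule can be written down in a few lines. This is only a sketch of the logic as I described it, not how the CPU or ESXi implements it. The function name `effective_microcode` is made up for illustration, and the two revision numbers are the ones quoted above.

```python
def effective_microcode(bios_rev, os_rev):
    """Return (revision, source) of the microcode that ends up running.

    Rule as described in the text: the OS-supplied microcode is only
    used when it is newer than the one the BIOS already loaded.
    """
    if os_rev > bios_rev:
        return os_rev, "os"
    return bios_rev, "bios"


ESXI_70B_D2100 = 0x02006901  # microcode offered by the ESXi 7.0b patch
BIOS_13A = 0x02006906        # microcode in SuperMicro beta BIOS 1.3a

rev, source = effective_microcode(BIOS_13A, ESXI_70B_D2100)
print(f"running 0x{rev:08x} from {source}")
# prints: running 0x02006906 from bios
```

With BIOS 1.3a the comparison favours the BIOS revision, which matches what I observed: the microcode from the ESXi patch is skipped.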

We just validated in our lab on Dell hardware and see no issues whatsoever. I can’t access the Slack channel, so that makes it difficult to check any further.

Hello Duncan

It seems to be specific to the E300-9D and its Xeon-D. Meanwhile we’ve narrowed the issue to the microcode update.

There is a topic in VMTN now:

https://communities.vmware.com/thread/639122

Same issue with a Supermicro board X11SDV-8C-TP8F

Spent a lot of time debugging this to come to the same conclusion.

Update 16324942 messed something up.

Same issue with a Supermicro board X11SDV-8C-TP8F

So frustrated with Supermicro’s product. Is it a sensor issue? I have no way to resolve it so far. Looking for solutions.

Have you tried the latest BIOS?

Currently Supermicro offers an official BIOS 1.4.